Patrick McHardy presents his work on a modification of netlink and nfnetlink_queue that uses memory mapping.

One of the problems of netlink is that it uses regular socket I/O, so data needs to be copied to the socket buffer data areas before being sent. This hurts performance.

The basic concept of memory mapped netlink is to use a shared memory area which can be accessed by both kernel and userspace. A ring buffer is set up and, instead of copying the data, we just move a pointer to the correct memory area and the userspace reads

It is necessary to synchronize kernel and userspace to avoid reading an area that does not hold valid data. This is done by tracking the ownership of each area.
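The ownership idea can be illustrated with a small, self-contained sketch. This is not Patrick's code or the actual kernel interface: the names (`struct frame`, `FRAME_UNUSED`, `FRAME_VALID`, `ring_produce`, `ring_peek`, `ring_release`) and the sizes are hypothetical, and both sides live in one process here instead of a real shared mapping. Each frame carries a status word saying who owns it; the producer only writes into frames it owns, and the consumer only reads frames that have been handed over.

```c
#include <assert.h>
#include <stdatomic.h>
#include <stddef.h>
#include <string.h>

#define FRAME_COUNT   4   /* hypothetical number of frames in the ring */
#define FRAME_PAYLOAD 64  /* hypothetical payload size per frame */

/* Hypothetical ownership states, for illustration only. */
enum frame_status {
    FRAME_UNUSED = 0,  /* owned by the producer (the kernel for an RX ring) */
    FRAME_VALID  = 1   /* filled and handed over to the consumer */
};

struct frame {
    _Atomic int status;                 /* ownership marker */
    size_t len;                         /* bytes used in data[] */
    unsigned char data[FRAME_PAYLOAD];
};

struct ring {
    struct frame frames[FRAME_COUNT];
    size_t head;  /* next frame the producer will fill */
    size_t tail;  /* next frame the consumer will read */
};

/* Producer side. The memcpy stands in for the kernel building the message
 * directly inside the frame. Returns 0 on success, -1 if the next frame is
 * still owned by the consumer or the message does not fit. */
static int ring_produce(struct ring *r, const void *msg, size_t len)
{
    struct frame *f = &r->frames[r->head];

    if (len > FRAME_PAYLOAD)
        return -1;
    if (atomic_load_explicit(&f->status, memory_order_acquire) != FRAME_UNUSED)
        return -1;

    memcpy(f->data, msg, len);
    f->len = len;
    /* Release: the payload must be visible before ownership changes. */
    atomic_store_explicit(&f->status, FRAME_VALID, memory_order_release);
    r->head = (r->head + 1) % FRAME_COUNT;
    return 0;
}

/* Consumer side: returns a pointer into the ring (no copy), or NULL if the
 * next frame has not been handed over yet. */
static const unsigned char *ring_peek(struct ring *r, size_t *len)
{
    struct frame *f = &r->frames[r->tail];

    if (atomic_load_explicit(&f->status, memory_order_acquire) != FRAME_VALID)
        return NULL;
    *len = f->len;
    return f->data;
}

/* Consumer side: give the frame back to the producer once it is processed. */
static void ring_release(struct ring *r)
{
    struct frame *f = &r->frames[r->tail];

    assert(atomic_load_explicit(&f->status, memory_order_acquire) == FRAME_VALID);
    atomic_store_explicit(&f->status, FRAME_UNUSED, memory_order_release);
    r->tail = (r->tail + 1) % FRAME_COUNT;
}
```

A consumer loop would call `ring_peek()` until it returns `NULL`, process each payload in place, and call `ring_release()` after each one. When the ring is full, `ring_produce()` fails, which is the point where a real implementation would need some other path for the message.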

There is an RX ring and a TX ring, so it is possible to send packets (or issue verdicts) via the TX ring. There are few advantages on the TX side, apart from the possibility of batching verdicts by issuing multiple verdicts in one send message.
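The benefit of batching on the TX side can be sketched as follows. This is only an illustration under stated assumptions: `struct verdict_batch`, `batch_add`, `batch_flush` and `BATCH_MAX` are hypothetical names, not part of libmnl or nfnetlink_queue, and the actual send is replaced by a counter so that the number of send operations can be observed.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define BATCH_MAX 8  /* hypothetical number of verdicts queued per send */

/* Illustrative verdict values. */
enum { VERDICT_DROP = 0, VERDICT_ACCEPT = 1 };

struct verdict {
    uint32_t packet_id;
    uint32_t verdict;
};

struct verdict_batch {
    struct verdict items[BATCH_MAX];
    size_t count;    /* verdicts queued and not yet sent */
    unsigned sends;  /* number of send operations performed so far */
    size_t sent;     /* total number of verdicts delivered so far */
};

/* Deliver all queued verdicts with a single send operation. A real
 * implementation would notify the kernel once here; this sketch only
 * counts the operation. */
static void batch_flush(struct verdict_batch *b)
{
    if (b->count == 0)
        return;
    b->sends++;
    b->sent += b->count;
    b->count = 0;
}

/* Queue one verdict, flushing first if the batch is already full. */
static void batch_add(struct verdict_batch *b, uint32_t id, uint32_t v)
{
    if (b->count == BATCH_MAX)
        batch_flush(b);
    assert(b->count < BATCH_MAX);
    b->items[b->count].packet_id = id;
    b->items[b->count].verdict = v;
    b->count++;
}
```

With this layout, issuing ten verdicts followed by a final `batch_flush()` costs two send operations instead of ten.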

Backward compatibility with subsystems that do not support this new system is ensured by falling back to the standard copy-based sending and receiving of messages.

Ordering of messages was a difficult problem to solve: the position of a packet in the ring depends on when its area was allocated and not on when the packet arrived. It is thus possible to have out-of-order packets in the ring. To fix this, userspace can specify that it cares about ordering, and the kernel will then perform the reception of the packet and the copy atomically.

Multicast is currently not supported. Synchronizing data across clients is a big issue and most of the solutions would have bad performance.

Userspace support has been done in libmnl. As usual with Patrick, the API looks clean and adding support for it in

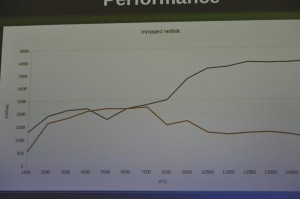

Testing has only been done on a loopback interface because Patrick did not have access to a 10Gbit test bed. This is an unfavorable test case because copying on loopback is less expensive, so measurements on real NICs should show better results.

Anyway, the performance impact is significant: between 200% and 300% bandwidth increase, depending on the packet size.

There are currently no known bugs and the submission to netdev should occur soon.