Introduction

During the Netfilter Workshop 2008, we had an interesting discussion about the fact that NFQUEUE is a terminal decision. This has some strong implications, in particular when working with an IPS like Suricata (or snort-inline at the time of the discussion): the IPS must receive all packets routed by the gateway and can only issue a terminal DROP or ACCEPT verdict. Its verdict thus takes precedence over all subsequent rules in the ruleset: any ACCEPT rule placed before the IPS rule removes packets from IPS analysis and, conversely, any decision placed after the IPS rule is ignored.

IPS rule placement

The first question is where to put the IPS rule. From a Netfilter point of view, the NFQUEUE rule for Suricata should be the first rule of the FORWARD filter chain, but this is not possible as NFQUEUE is a terminal rule: every rule placed after it would be ignored. A classic trick is to put the rule in PREROUTING mangle, but this is not a good choice as destination NAT has not yet been done: the IPS will not see the real target of the packet and will thus not be able to use things like OS or server type declarations. The current best bet therefore seems to be FORWARD mangle.

This solution does not solve the main issue: ACCEPT can be used in the mangle table, and thus putting an NFQUEUE rule in last place will not work. Here, we’ve got two possibilities:

- We can modify the rules generation

- Global filtering is done by an independent tool

IPS rule over an independent rule generation system

NFWS 2008 covered the second case, and Patrick McHardy proposed an interesting solution. It is not very well known, but an NFQUEUE verdict can take three values:

- NF_ACCEPT: packet is accepted

- NF_DROP: packet is dropped and sent to hell

- NF_REPEAT: packet is reinjected at start of the hook after the verdict

Patrick proposed to use the NF_REPEAT feature: the IPS rule is put in first place and the IPS issues an NF_REPEAT verdict instead of NF_ACCEPT for accepted packets. As NF_REPEAT alone would trigger an infinite loop, we need a way to distinguish the packets that have already been treated by the IPS. This can easily be done by putting a mark on the packet during the verdict. This feature has been supported by NFQUEUE since its origin, and the IPS can do it easily (the only condition for this solution is to be able to dedicate one bit of the mark to this system).

With this system, the rule needed to have Suricata intercept packets is the following:

iptables -A FORWARD ! -i lo -m mark ! --mark 0x1/0x1 -j NFQUEUE

Here, mark and mask are both set to 1: the rule matches only packets whose mark does not have that bit set, that is, packets the IPS has not seen yet. By adding this simple rule at the top of the FORWARD filter chain, we obtain a ruleset which easily combines the inspection of all packets by the IPS with the traditional filtering ruleset.
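To see why the mark stops the loop, the bit test can be replayed with plain shell arithmetic. This is only a sketch: `needs_ips` and `apply_mark` are hypothetical helpers that do not touch Netfilter, and the way the mark is merged under the mask in `apply_mark` is an assumption about what the IPS does at verdict time.

```shell
# Hypothetical helpers, for illustration only: they mimic the bit tests
# on the 32-bit packet mark, they do not touch Netfilter.

# Succeeds when "-m mark ! --mark VALUE/MASK" would match, i.e. when
# (mark & MASK) != VALUE and the packet must still go to NFQUEUE.
# Arguments: mark value mask
needs_ips() {
    [ $(( $1 & $3 )) -ne $(( $2 )) ]
}

# Assumed behaviour of the IPS on verdict: set VALUE under MASK and keep
# every other bit of the mark untouched.
# Arguments: mark value mask
apply_mark() {
    echo $(( ($1 & ~$3) | ($2 & $3) ))
}

mark=0x4                              # mark already set by some other rule
if needs_ips "$mark" 0x1 0x1; then    # first pass: packet is queued
    mark=$(apply_mark "$mark" 0x1 0x1)
fi
# Second pass after NF_REPEAT: the rule no longer matches.
needs_ips "$mark" 0x1 0x1 || echo "mark $mark: already inspected"
```

On the second pass the packet skips the NFQUEUE rule and continues through the rest of the FORWARD chain, while the other bits of the mark stay available to the rest of the ruleset.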

I’ve sent a patch to modify Suricata in this way. In this new nfq.repeat_mode, Suricata issues an NF_REPEAT verdict instead of an NF_ACCEPT verdict and puts a mark ($MARK) with respect to a mask ($MASK) on the handled packet.

Building an IPS ready ruleset

The basics

Let’s suppose now that we can modify the rule generation system. We thus have all the flexibility needed to build a custom ruleset which combines the filtering and IPS tasks.

Let’s recall our main target: we want the IPS to analyse all packets going through the gateway. In Netfilter terms, this can be formulated as follows: we want to send all packets which are accepted in the FORWARD chain to the IPS. This is equivalent to replacing every ACCEPT verdict by the action of sending the packet to the IPS. To do this, we can simply use a custom user chain:

iptables -N IPS

iptables -A IPS -j NFQUEUE --queue-balance 0:1

Then, we replace all ACCEPT rules by a target sending the packet to the IPS chain (use -j IPS instead of -j ACCEPT).
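If the ruleset is available as text in iptables-save format, the replacement can be sketched as a small filter. The function name `forward_accept_to_ips` and the sample rules are made up for illustration, and the sketch assumes each rule ends with its `-j` target.

```shell
# Hypothetical helper: reads rules in iptables-save format on stdin and
# sends every packet accepted in FORWARD to the IPS chain instead.
# Rules of other chains (INPUT, OUTPUT, IPS itself) are left untouched.
forward_accept_to_ips() {
    sed -e '/^-A FORWARD /s/-j ACCEPT$/-j IPS/'
}

# Example ruleset (made up for illustration).
forward_accept_to_ips <<'EOF'
-A INPUT -i lo -j ACCEPT
-A FORWARD -m conntrack --ctstate ESTABLISHED,RELATED -j ACCEPT
-A FORWARD -p tcp --dport 80 -j ACCEPT
-A FORWARD -j DROP
EOF
```

Only the two FORWARD rules ending in `-j ACCEPT` are rewritten; the INPUT rule and the final DROP are printed unchanged.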

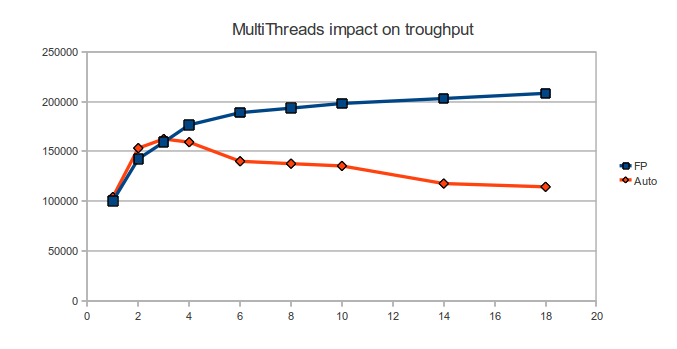

Note: Some readers will have seen that the NFQUEUE rule uses the queue-balance option, which specifies a range of queues to use. Please note that packets from the same connection are sent to the same queue and that, at the time of writing, Suricata has patches under review which add multiqueue support.

The interest of using a custom chain is that we can define things like exceptions or special treatments in the chain. For example, to ignore a specific computer (1.2.3.4 in the example), we can do:

iptables -I IPS -d 1.2.3.4 -j ACCEPT

An objection

Some may object that we don’t get every packet because we only send accepted packets to the IPS. My answer is that it is not the role of the IPS to treat these. It is the role of the firewall to send alerts on blocked packets, and it is the role of the SIEM to combine firewall logs with IPS information. If you really want all packets to be sent to the IPS, then repeat mode is your friend.

Chaining NFQUEUE

One real issue with the setup described here is the handling of multiple programs using NFQUEUE. The previous method can only be applied to one program, as sending to NFQUEUE is terminal.

Here, we have two solutions. The first one is to use the NF_REPEAT method in the programs other than the IPS that use NFQUEUE. After some iterations, the packet will reach a -j IPS rule and we will get the wanted result.

Another method is to use the queue routing capabilities of NFQUEUE. The verdict is a 32-bit integer, but only the lower 16 bits are used by the verdict itself. The upper 16 bits, if not null, indicate the queue the packet has to be sent to after the verdict by the current program. This method is elegant but requires support for the feature in the involved programs.
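The bit layout can be illustrated with shell arithmetic. This is a sketch under assumptions: `route_verdict` and `verdict_queue` are hypothetical helper names, and the low bits are assumed to carry the NF_QUEUE verdict code (3 in `<linux/netfilter.h>`) when a target queue is given.

```shell
# Verdict code taken from <linux/netfilter.h>.
NF_QUEUE=3

# Hypothetical helper: build a verdict that hands the packet over to
# queue number $1 (upper 16 bits) with the NF_QUEUE code in the low bits.
route_verdict() {
    echo $(( ($1 << 16) | $NF_QUEUE ))
}

# Hypothetical helper: extract the target queue number from verdict $1.
verdict_queue() {
    echo $(( ($1 >> 16) & 0xffff ))
}

v=$(route_verdict 1)
printf 'verdict=0x%08x queue=%d\n' "$v" "$(verdict_queue "$v")"
# prints: verdict=0x00010003 queue=1
```

A program listening on queue 0 could thus pass a packet on to a second program listening on queue 1 by issuing this value as its verdict.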